The New Units of Economics in Software Engineering Are Undecided

But the Old Ones Are Obsolete

The frameworks we use to measure software engineering (story points, sprint velocity, team headcount, Flow Load) were built to manage the cost of human coordination. That cost is changing. This article argues that the team unit itself has changed: the modern high-output engineering team is a hybrid of human and agent nodes, and the composition is determined by the project, not prescribed by a framework. At the micro level, this article introduces the + operator, the structural shift that separates the hybrid team from its predecessors, and proposes five candidate metrics for measuring what actually matters in this new model. At the macro level, it argues that lowered barriers to entry are accelerating competitive dynamics in ways that make the "save headcount" response not just insufficient but strategically dangerous. The new units are undecided. The organisations defining them are already operating without them.

There’s a version of this article that leads with a provocative number: how many fewer engineers companies will hire, how many jobs will disappear, how the industry is about to contract. I’m not writing that article. Not because those questions are unreasonable, but because I think they are the wrong ones to lead with.

AI coding agents have slashed the entry barriers to building serious software. Very small teams can now deliver enterprise-grade products — new software, innovative takes, new waves of disruption in what Geoffrey Moore would recognise as the chasm-crossing, tornado-riding zones of innovation [2]. That’s why, for engineering leaders, the question shouldn’t be how do I save headcount. It should be what can I do with the capacity I’ve just been handed. The frame of subtraction — fewer engineers, lower costs, smaller teams — misses the actual opportunity entirely. The frame that matters is addition: more projects, more bets, more surface area, more ambition.

The old units — headcount, sprint velocity, story points, team size — were built for a different constraint. That constraint is gone. We don’t yet have agreed replacements. That gap is what this article is about.

What I Actually Work With Now

The best way I can explain what has changed is to describe a few moments where the old assumptions visibly broke.

The first was inside an ongoing migration project at work. Complex domain logic accumulated over years — the kind of work where the cognitive load alone is the bottleneck: understanding the existing patterns, the edge cases, the implicit decisions baked into a system that had grown beyond any single person’s ability to hold fully in their head. The project had been running for some time with meaningful complexity still ahead. Not because the team wasn’t capable — because this class of problem is genuinely hard to transfer between people, and the ramp time for each new contributor compounds the delay.

An agent was brought in. Half a day to surface the patterns with guidance. Eighty to ninety percent accurate transformations from that point forward. A project that had been moving slowly is now on track to complete this quarter.

That’s not a productivity improvement. That’s a phase change. And it is not unique. Across the industry, agents are being applied to exactly these classes of problem — deep legacy code, complex domain logic, accumulated institutional knowledge — with consistent results. The COBOL story covered later in this article is the same pattern at more dramatic scale.

The second was a deliberate research experiment: could the same approach work in a domain with zero prior experience? Mobile development, game mechanics, Flutter — none of it familiar territory. The goal was a multiplayer game, which meant state synchronisation, real-time logic, platform-specific constraints, and a stack I had never written a line of. The setup mattered as much as the tools: one AI instance acting as architect, producing structured task cards against the spec. Separate coding agents working in parallel, each scoped to a distinct part of the monorepo. A root agent validating every output against the full codebase before anything merged — a hallucination check built into the workflow itself. The research concluded with a shippable multiplayer game. New domain, new stack, new class of problem — the ramp that should have taken months compressed into weeks.
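
The workflow described above can be sketched roughly as follows. This is an illustrative outline only, not the tooling used in the experiment: `TaskCard`, `Patch`, `run_pipeline`, and the three stub functions are hypothetical names, and real agent calls would replace the stubs.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TaskCard:
    scope: str        # the part of the monorepo this card is limited to
    instruction: str  # what the coding agent is asked to do


@dataclass(frozen=True)
class Patch:
    scope: str
    content: str


def run_pipeline(
    spec: str,
    architect: Callable[[str], list[TaskCard]],
    coder: Callable[[TaskCard], Patch],
    validator: Callable[[Patch], bool],
) -> list[Patch]:
    """Architect writes task cards, coders work in parallel, validator gates the merge."""
    cards = architect(spec)
    with ThreadPoolExecutor() as pool:
        patches = list(pool.map(coder, cards))  # results keep the order of the cards
    return [patch for patch in patches if validator(patch)]


# Hypothetical stand-ins for the three kinds of agent.
def stub_architect(spec: str) -> list[TaskCard]:
    return [TaskCard(scope, f"{spec}: implement {scope}") for scope in ("client", "server")]


def stub_coder(card: TaskCard) -> Patch:
    # Pretend the server agent returned nothing usable.
    content = "" if card.scope == "server" else f"// code for {card.instruction}"
    return Patch(card.scope, content)


def stub_validator(patch: Patch) -> bool:
    # A real root agent would check the patch against the full codebase.
    return bool(patch.content.strip())


merged = run_pipeline("lobby screen", stub_architect, stub_coder, stub_validator)
print([patch.scope for patch in merged])
```

The point of the shape is that nothing reaches `merged` without passing the validator, which is where the hallucination check sits in the workflow.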

There is nothing special about this experiment. Any mid-to-senior engineer could run the same setup and plausibly reach a similar outcome. Many are. According to Carta data, the share of solo-founded startups rose from 23.7% in 2019 to 36.3% by mid-2025, a rise that coincides with the mainstreaming of AI coding assistants and agentic tools [10]. The minimum viable team is shrinking not because founders are exceptional, but because the tools changed the constraint.

The third experiment had nothing to do with software. With no relevant domain skill at all, I produced four original music albums. Published. Live. Accumulating streams. The only thing I contributed that couldn’t be assisted was the origin: a specific life and lived experience to imprint, which no agent can replicate. The craft of realising it, which had always been the barrier, was no longer the barrier.

Jensen Huang put a version of this plainly at Computex in 2023: “The programming barrier is incredibly low. We have closed the digital divide. Everyone is a programmer now — you just have to say something to the computer.” [9] The music experiment is that argument applied beyond software. The AI handled the manual execution layer. The human provided the only thing that can’t be generated: genuine experience and the intent to express it. This pattern — expert provides direction, agent provides craft — will replicate across domains far beyond engineering.

These examples sit across a spectrum — from enterprise migration work to solo research — but they point at the same thing. The software engineering landscape has changed massively. The question is whether the surrounding techniques, overhead, and management practices have changed with it.

Enterprise software is not solo experimentation. It carries compliance, coordination, stakeholder alignment, legacy decisions, and organisational inertia that no agent eliminates. So what does the new model actually look like when it has to operate inside those constraints — and has the industry even begun to answer that question?

The Scrum Team Was a Solution to a Problem

Every framework built around how humans work is ultimately built around how humans think. And human thinking has a hard constraint at its foundation.

In 1956, cognitive psychologist George Miller published what became one of the most cited papers in psychology: The Magical Number Seven, Plus or Minus Two [8]. His finding: the average person can hold approximately 7 ± 2 items in working memory at once. Later research by Cowan refined this further — the actual capacity for novel, unrelated information is closer to 4 chunks [8]. Beyond that limit, cognitive load overwhelms processing capacity. Information stops flowing in and starts getting dropped.

This isn’t an edge case of human cognition. It is human cognition. And it is the invisible foundation beneath every framework, ceremony, and team design principle in software engineering. Scrum’s team size limits, its 15-minute standup timeboxes, its sprint ceremonies — all of it is ultimately load management. The frameworks exist because humans can only hold so much, and distributed work across a team multiplies what needs to be held.

The 2020 Scrum Guide defines the ideal team as “typically 10 or fewer people,” noting that smaller teams communicate better and are more productive [3]. Earlier versions of the guide were more precise: 3 to 9 developers, with the empirical sweet spot at 7 ± 2 [4] — a number that traces directly back to Miller’s 1956 paper [8].

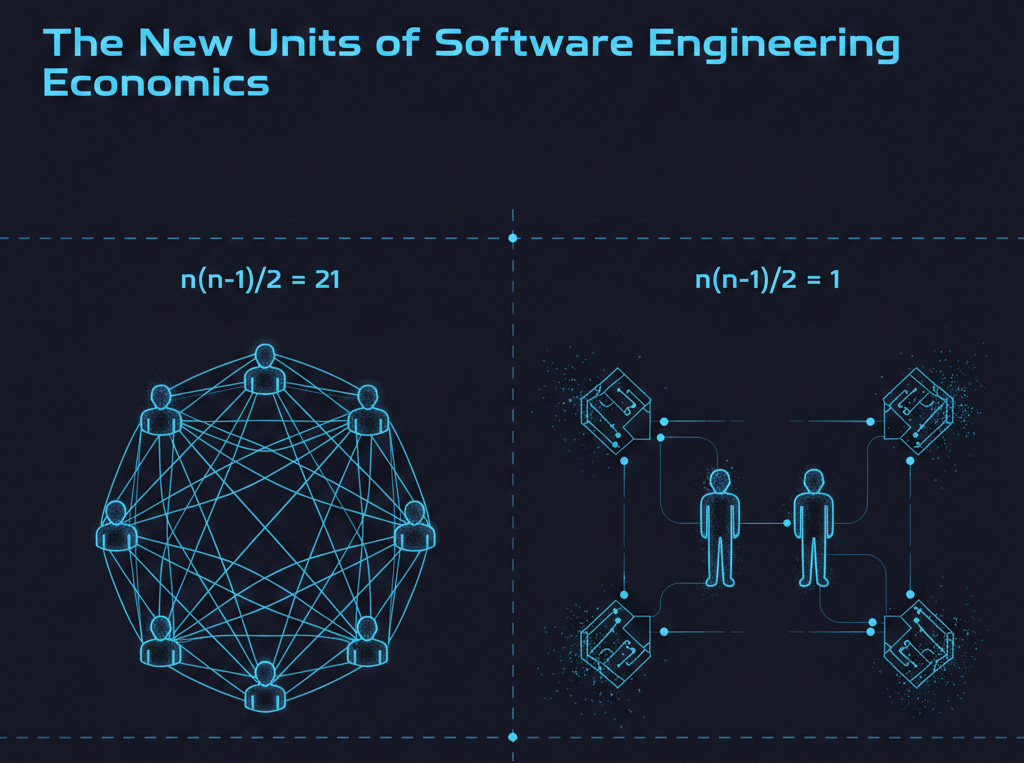

The reasoning behind the upper limit is mathematical, not arbitrary. Interpersonal links between team members don’t grow linearly. The formula is n(n-1)/2: a team of 9 has 36 relationships to maintain; a team of 15 has over 100 [5]. Every link is coordination overhead — context to share, alignment to reach, trust to build. Scrum’s ceremonies exist to manage those links. Standups, retrospectives, sprint planning — they are the maintenance cost of human collaboration at scale.
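
As a worked check of those figures, writing $L(n)$ (a symbol introduced here for convenience) for the number of pairwise links in a team of $n$ people:

```latex
\[
L(n) = \binom{n}{2} = \frac{n(n-1)}{2}, \qquad
L(9) = \frac{9 \cdot 8}{2} = 36, \qquad
L(15) = \frac{15 \cdot 14}{2} = 105 .
\]
```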

Below the minimum, the problem inverts. Teams of 2 or 3 lack diversity of thought, struggle with skill coverage, and show measurably reduced quality [6]. The framework assumes a floor as well as a ceiling.

This is the constraint that shaped everything: team size, ritual design, delivery cadence, estimation practices. All of it is load-bearing against the same underlying problem — distributed cognition across a group of humans.

Now consider the 2-human + 4-agent team through the same mathematical lens. Agents don’t generate interpersonal links in the way humans do. They don’t need alignment meetings. They don’t build up unspoken context that has to be re-shared with teammates; whatever context they need is supplied explicitly with the task. The n(n-1)/2 coordination curve (the very formula that makes large teams inefficient and drives the 10-person ceiling) changes shape entirely. A team of 2 humans has exactly 1 interpersonal link to maintain. The agents extend capability without extending the coordination surface.
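
A small sketch makes the comparison concrete. It encodes the argument's own assumption that agents add no interpersonal links; that is a modelling choice, not a measured result, and the function names are illustrative.

```python
def interpersonal_links(humans: int) -> int:
    """Pairwise links among humans: n(n-1)/2."""
    if humans < 0:
        raise ValueError("humans must be non-negative")
    return humans * (humans - 1) // 2


def hybrid_links(humans: int, agents: int) -> int:
    """Links in a hybrid team, assuming agents contribute no interpersonal links."""
    if agents < 0:
        raise ValueError("agents must be non-negative")
    return interpersonal_links(humans)


# Six nodes either way: an all-human team of 6 versus 2 humans + 4 agents.
print(interpersonal_links(6))  # 15
print(hybrid_links(2, 4))      # 1
```

The human cost of steering the agents is not zero; it is simply not a pairwise human link, so it is not counted here.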

At enterprise scale, the problem compounds. When multiple Scrum teams work toward the same product, organisations reach for Scrum of Scrums — a coordination layer above the team layer — and frameworks like SAFe to manage the dependencies between them. Dr. Mik Kersten’s Flow Framework, introduced in Project to Product in 2018, was designed to bring measurement rigour to exactly this context [7]. It introduced five metrics to track value delivery across product value streams: flow velocity, flow time, flow efficiency, flow distribution, and — the one most directly tied to cognitive load — flow load. Flow Load tracks the number of items currently in progress across a team or value stream; high WIP drives context switching, reduces throughput, and over time increases attrition risk [7]. It became the enterprise-scale proxy for the question: is this team overwhelmed?

These frameworks were built for a world where coordination overhead was the dominant constraint on delivery speed. They are sophisticated, well-researched responses to a genuine problem. But they are also instrumentation built around the assumption that the team unit is human — that the n(n-1)/2 link growth applies, that cognitive load distributes across people, that WIP limits matter because human attention is finite and non-parallel.

Agents change several of those assumptions simultaneously. An agent doesn’t context-switch the way a human does. Flow Load as a metric was designed to protect humans from overload — but what is the equivalent measure for a team where most of the execution capacity isn’t human? We don’t have an answer yet. That’s exactly the gap this article is pointing at.

The knowledge transfer assumption deserves particular attention, because the evidence here is the most dramatic. Anthropic recently published a post showing Claude Code can map dependencies across a COBOL codebase, surface risks, and document workflows that “would take human analysts months to surface” [1]. COBOL — built in 1959, running 95% of ATM transactions in the US, its original authors long retired, its institutional knowledge carried out the door with them. For decades, modernising a COBOL system meant years of specialist consulting engagements because, as Anthropic put it, “understanding legacy code cost more than rewriting it. AI flips that equation.” [1] An agent read the codebase. It mapped what no living person fully understood anymore. Domain Ramp Time — one of the candidate new units proposed later in this article — didn’t compress. It collapsed.

A Proposal: The Human + Agent Team

If the Scrum team was the answer to a coordination problem, and the coordination problem has fundamentally changed shape — then the team unit needs to change with it.

Here is the proposal: the modern engineering team is a hybrid of human and agent nodes. The composition is not prescribed — it is determined by the project, the domain, and the capability of the agents available. A solo engineer with four specialised agents. Two humans with six. Three with twelve. The ratio is fluid. What is not fluid is the structural shift the + operator represents: the team now contains non-biological members, and the frameworks built assuming otherwise no longer apply cleanly.

The + is the innovation. Not the specific numbers.

What changes at the small end of the spectrum is the most significant. When the human count drops below the old Scrum minimum — roughly 3 to 4 people — something fundamental happens: the n(n-1)/2 coordination curve becomes trivial. Two humans have exactly one interpersonal link to manage. One human has none. The problem Scrum was designed to solve simply does not exist at this scale, which means building Scrum on top of it is pure overhead with no corresponding benefit.

2+4 is an illustration of this minimum viable hybrid team — not a prescription. It represents the point where human coordination overhead collapses to near zero while agent capacity is large enough to carry serious workstreams in parallel. It is a useful mental model, not a staffing formula.

The transition back matters too. As the human count grows — as a project scales, as organisational complexity increases, as more stakeholders enter the picture — the coordination problem re-emerges. At some threshold, the old Scrum dynamics become relevant again. The human links multiply, context starts to fragment, ceremonies start earning their overhead. The frameworks don’t become obsolete at enterprise scale; they become obsolete specifically at the small end where they were always weakest anyway. What the hybrid model does is extend the lower boundary of what a coherent, high-output team can look like — and that extension is where most of the interesting things are now happening.

What the 2+4 team has instead of Scrum is structure of a different kind: clear human roles, deliberate agent composition, and explicit ownership of the quality bar. The agents are not junior engineers. They are not interchangeable tools. They are collaborators with distinct capabilities that need to be orchestrated — which means the humans need to understand what each agent is good at, where it will fail confidently, and how outputs from one feed into the next. That is a skill. It is the new engineering skill.

Vertical Ownership Returns

There’s something that used to be reserved for the heroic engineer — the one who owned the entire stack, front to back, infrastructure to product. We mythologised them and also quietly resented the bus factor they created. Full vertical ownership was a liability.

It isn’t anymore. With agents handling the execution layer across domains, one person can genuinely own a feature end to end — not by being a 10x engineer, but by having capable collaborators across every layer of the stack. The mobile research experiment described earlier is a direct example: a domain I had never touched, a stack I had never written, a shippable product at the end. Not because the engineering boundaries disappeared — but because the barrier of domain unfamiliarity stopped being the constraint.

This changes the relationship with work. Ownership isn’t just a responsibility allocation in a JIRA board. It’s the feeling of being able to trace a problem from the user to the infrastructure and back. That feeling — and the judgment it produces — is back on the table for more people.

The Senior and the Junior

The senior engineer’s job changed more than the junior’s. That sounds counterintuitive, but I think it’s true.

The junior in the 2+4 model has a permanent pair-programming partner that never loses patience, never condescends, and gives instant feedback on every line. The learning surface is enormous. You can experiment faster, fail safely, and build intuition at a rate that wasn’t possible before. The junior who embraces this model will compound faster than any junior cohort in the history of software development.

The senior’s shift is harder to name. Less coding — obviously. But it’s not just that. It’s the move from executing to orchestrating. The senior’s primary value is now judgment: knowing when the agent is confidently wrong, when the architecture it’s proposing will cause pain in six months, when the elegant solution is actually the brittle one. You can’t outsource that. It’s the residue of years of being burned by exactly those decisions.

The senior also sets the taste of the team. What quality looks like. What good enough means. What needs to be built properly versus what can be scaffolded fast and revisited. Agents execute to spec. The senior defines the spec — and knows when the spec is the problem.

The Assumptions That No Longer Hold

Scrum was built on a set of assumptions that made sense when they were written. Most of them don’t hold anymore.

Assumption: Knowledge transfer takes time. It does, between humans. Agents don’t need onboarding the same way. You describe the context, the codebase, the constraints — and you’re working. Not in weeks. In minutes.

The clearest proof of this is the COBOL modernisation work described earlier [1]. COBOL dates from 1959 and still powers the core of global banking and government systems. The developers who built those systems retired decades ago. The institutional knowledge left with them. Universities stopped teaching the language. For years, modernising a COBOL system meant hiring armies of specialist consultants for multi-year engagements, because understanding the legacy code cost more than rewriting it [1].

An agent read the codebase. It mapped what no one alive fully understood anymore.

This isn’t an edge case. It’s the extreme version of something happening everywhere: the cognitive load of onboarding — of getting a human up to speed on a complex system — is collapsing. Not gradually. Rapidly. The assumption that ramping into a new domain takes months was never really about the domain’s complexity. It was about the limits of how humans transfer knowledge between each other. That constraint is dissolving.

Assumption: Parallel workstreams require parallel people. They don’t anymore, not in the same way. One human with well-orchestrated agents can carry multiple workstreams without the coordination tax that made that unworkable before.

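Assumption: Estimation is hard because people are variable. People are still variable. But the variance profile changes when agents handle a large portion of execution. Execution of a well-specified task becomes faster and more repeatable, so more of the remaining uncertainty sits in the specification than in the build.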

I’m not arguing Scrum is dead — it still serves teams and contexts where these assumptions hold. But for a small, senior-weighted team working with AI agents, the framework is overconstrained for the actual problem.

Towards New Units

The old units are obsolete. The new ones are not yet agreed. But the questions worth asking are becoming clearer — and some candidate metrics are already visible in the practice of teams running this model today.

Cycle Depth is perhaps the most fundamental. In a hybrid human-agent team, every piece of work follows a cycle: human initiates with context and architecture, agents execute, outputs are validated, corrections applied, and the cycle repeats until the output is releasable. The depth of that cycle — the number of human-agent interaction loops required to reach releasable quality — is a direct proxy for the clarity of the human thinking that initiated it. A depth of 3 is a well-architected problem handed to well-scoped agents. A depth of 10 or more signals that something broke down upstream: unclear context, ambiguous task cards, architectural thinking that wasn’t sharp enough before execution began. Cycle Depth is not a measure of agent capability. It is a measure of human clarity. Teams that track it will find their real bottleneck.
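
One way a team might start tracking this is sketched below. The `WorkItem` type, the `review_at` threshold of 10 (taken from the rough figure above), and the sample data are all illustrative assumptions rather than an established method.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class WorkItem:
    name: str
    loops: int  # human-agent interaction loops before the output was releasable


def cycle_depth_summary(items: list[WorkItem], review_at: int = 10) -> dict:
    """Median Cycle Depth plus the items deep enough to warrant an upstream review."""
    if not items:
        raise ValueError("at least one work item is required")
    return {
        "median_depth": median(item.loops for item in items),
        "needs_upstream_review": [item.name for item in items if item.loops >= review_at],
    }


# Hypothetical sample data.
items = [
    WorkItem("auth migration", 3),
    WorkItem("billing rules", 12),
    WorkItem("report export", 4),
]
summary = cycle_depth_summary(items)
print(summary)
```

The flagged list is the useful part: it points at the task cards whose upstream context deserves a second look, rather than at the agents that executed them.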

Context Fidelity measures how well the agent architecture holds coherent understanding of the project as it scales. Small projects need one or a few agents. Large projects need specialised agents scoped to distinct parts of the codebase, with root or validating agents sitting above them to catch drift before it compounds. As projects grow, agents transition roles: a single generalist agent becomes an architect agent, dedicated coding agents, and a validation layer. Context Fidelity is expressed as a ratio of coding agents to validating agents, tracked alongside the number of context transitions the project has undergone. A high ratio (many executors per validator) on a large, complex project is a risk signal: confident but wrong outputs can accumulate without sufficient checking. A high number of context transitions signals that the project has grown in complexity faster than the agent architecture has adapted to it. This makes Context Fidelity a dual-purpose metric: it measures the current health of the agent setup, and it tracks complexity growth over the project’s life. It is, in other words, a complexity ramp metric that emerges naturally from how the team is structured.
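
A minimal sketch of how that ratio could be recorded, assuming a simple count of agents by role. `AgentSetup`, `is_risk_signal`, and the `max_ratio` cut-off are hypothetical; the article does not propose a specific threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSetup:
    coding_agents: int
    validating_agents: int
    context_transitions: int  # times the agent architecture was restructured

    def executors_per_validator(self) -> float:
        """Coding agents per validating agent; infinite when nothing is validated."""
        if self.validating_agents == 0:
            return float("inf")
        return self.coding_agents / self.validating_agents


def is_risk_signal(setup: AgentSetup, max_ratio: float = 4.0) -> bool:
    # max_ratio is an arbitrary placeholder, not a recommended value.
    return setup.executors_per_validator() > max_ratio


small = AgentSetup(coding_agents=4, validating_agents=1, context_transitions=1)
large = AgentSetup(coding_agents=12, validating_agents=1, context_transitions=3)
print(small.executors_per_validator(), is_risk_signal(small))  # 4.0 False
print(large.executors_per_validator(), is_risk_signal(large))  # 12.0 True
```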

Where Cycle Depth captures the quality of upstream thinking, Orchestration Overhead captures the ongoing human cost of managing agents during execution — the time spent re-prompting, correcting drift, switching context between agents, and validating mid-cycle outputs. A well-architected problem handed to poorly scoped agents will have low Cycle Depth but high Orchestration Overhead: the initiation was clean, but the agents required constant steering to execute within it. These are different failure modes requiring different fixes. High Cycle Depth means invest in better architectural thinking before execution. High Orchestration Overhead means invest in better agent design, tighter task scoping, and stronger validation layers within the pipeline.
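
As a rough illustration, Orchestration Overhead could be computed as the share of logged human time spent steering agents. Treating it as a fraction is an assumption made here, as are the activity labels and the two limits in `suggested_fix`; the fixes themselves are the ones named above.

```python
STEERING_ACTIVITIES = {"re-prompt", "drift-correction", "agent-switch", "mid-cycle-validation"}


def orchestration_overhead(minutes_by_activity: dict[str, float]) -> float:
    """Share of logged human time spent steering agents during execution."""
    total = sum(minutes_by_activity.values())
    if total <= 0:
        raise ValueError("the log must contain some time")
    steering = sum(
        minutes
        for activity, minutes in minutes_by_activity.items()
        if activity in STEERING_ACTIVITIES
    )
    return steering / total


def suggested_fix(cycle_depth: int, overhead: float,
                  depth_limit: int = 10, overhead_limit: float = 0.3) -> list[str]:
    """Map the two failure modes to the fixes named in the text; limits are placeholders."""
    fixes = []
    if cycle_depth >= depth_limit:
        fixes.append("sharpen architectural thinking before execution")
    if overhead >= overhead_limit:
        fixes.append("improve agent design, task scoping, and validation layers")
    return fixes


# Hypothetical time log in minutes: clean initiation, heavy steering.
log = {"architecture": 60, "re-prompt": 40, "drift-correction": 20}
overhead = orchestration_overhead(log)
print(overhead, suggested_fix(cycle_depth=3, overhead=overhead))
```

The sample log reproduces the case described above: a low Cycle Depth alongside high overhead, which points at agent design rather than upstream thinking.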

Domain Ramp Time measures how long it takes a hybrid team to reach productive output in an unfamiliar domain. The COBOL story in this article is an extreme example of this metric collapsing — from months to hours. The mobile research experiment is another. Tracking Domain Ramp Time makes this collapse visible and creates accountability for maintaining it. It also becomes a direct measure of the team’s context-loading capability: how quickly can the human-agent system absorb a new codebase, a new domain, a new set of constraints, and begin producing reliable output.
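
A sketch of one possible measurement, assuming "productive output" is taken to mean the first output a human reviewer accepts as reliable. That definition, the function name, and the timestamps are illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import Optional


def domain_ramp_time(
    started: datetime,
    outputs: list[tuple[datetime, bool]],
) -> Optional[timedelta]:
    """Time from starting in a new domain to the first output accepted as reliable.

    `outputs` holds (timestamp, accepted) pairs; returns None if nothing was accepted.
    """
    accepted_times = [ts for ts, accepted in outputs if accepted and ts >= started]
    if not accepted_times:
        return None
    return min(accepted_times) - started


# Hypothetical timeline for a new codebase.
start = datetime(2025, 3, 3, 9, 0)
outputs = [
    (datetime(2025, 3, 3, 11, 0), False),  # first attempt rejected at review
    (datetime(2025, 3, 3, 13, 30), True),  # first accepted transformation
    (datetime(2025, 3, 4, 10, 0), True),
]
print(domain_ramp_time(start, outputs))  # 4:30:00
```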

Zones Entered Per Quarter is the macro unit — the Geoffrey Moore metric applied to the new model. Not velocity within an existing product, but the rate at which the organisation is placing new bets, entering new zones, running experiments that were previously unfundable. This is the metric that distinguishes organisations using the new capacity offensively from those using it defensively. An organisation optimising for headcount reduction will show flat or declining Zones Entered over time. An organisation using the hybrid model as an innovation engine will show the opposite.
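
Counting this is straightforward once an organisation agrees what qualifies as entering a zone, which is the hard part and is left open here. The sketch below simply groups hypothetical bets by the calendar quarter in which they started.

```python
from collections import Counter
from datetime import date


def quarter_label(day: date) -> str:
    return f"{day.year}-Q{(day.month - 1) // 3 + 1}"


def zones_entered_per_quarter(entries: list[tuple[str, date]]) -> dict[str, int]:
    """Count new bets by the quarter in which each one started."""
    counts = Counter(quarter_label(started) for _, started in entries)
    return dict(sorted(counts.items()))


# Hypothetical bets and start dates.
entries = [
    ("mobile companion app", date(2025, 1, 20)),
    ("partner API pilot", date(2025, 3, 5)),
    ("internal tooling spin-out", date(2025, 5, 12)),
]
print(zones_entered_per_quarter(entries))  # {'2025-Q1': 2, '2025-Q2': 1}
```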

None of these are fully formed. They are directions, not definitions — the starting point for a conversation the industry has not yet had in earnest. The frameworks that will eventually replace Scrum and Flow at the small-team end of the spectrum will be built by practitioners, refined through failure, and documented after the fact. That is how it always works. The contribution of this article is not to propose those frameworks prematurely — it is to argue that the conversation needs to start, that the old units are actively misleading the decisions being made today, and that the teams already operating without them are defining what comes next.

Here’s where I want to be honest rather than reassuring — and where I think most of the industry conversation is getting the framing badly wrong.

The dominant narrative is subtraction: smaller teams, lower headcount costs, leaner org structures. That framing is not wrong, but it is dangerously incomplete. It looks at the new capacity and asks how do I save money? That is the wrong question — and asking it while your competitors ask the right one is how you lose.

The right question is: how do I innovate faster with the capacity and velocity I’ve just been handed?

The lowered barrier doesn’t just benefit incumbents. It benefits everyone — including the challengers who couldn’t previously afford to enter your market. In Geoffrey Moore’s terms [2], the same dynamic that lets an established engineering org deliver more with a 2+4 team also lets a two-person startup build what used to require a Series A. The cost of crossing the chasm just dropped. The cost of riding the tornado just dropped. New zones of innovation are opening faster than the cadence incumbents are used to defending against.

This is the competitive dynamic that changes everything. Zone holders — the companies that currently own performance zone products, that have established market positions and loyal customer bases — now face disruption at a pace that has no historical precedent. The window between “a challenger enters with a novel approach” and “that challenger has enterprise-grade product” has compressed from years to months. The 2+4 team on the attacking side is not constrained by the org structures, the Scrum ceremonies, the Flow Load limits, or the hiring cycles of the defender.

The response cannot be to use the new capacity to run leaner. That is playing defence with an offensive weapon. The response has to be to use the gained velocity to innovate faster — to explore zones you couldn’t afford to explore before, to ship experiments that previously required a funded team, to move at the pace of the challengers rather than the pace of the enterprise.

The leaders who understand this will use the 2+4 model to expand surface area, place more bets, and accelerate into new zones before challengers can establish footholds. The leaders who don’t will optimise their way into irrelevance — running tighter, leaner, and slower than the market they’re trying to protect.

If you don’t adapt, the new reality will come at you faster than you can blink. Not because the technology is moving fast — though it is — but because the barriers that used to give you time to respond are gone.

What This Actually Requires: The Team

Two things that aren’t obvious from the outside.

First: trust calibration. You have to develop an accurate model of what agents are good at and where they’ll lead you confidently off a cliff. That model takes time to build and it requires you to be burned a few times. The engineers who are most dangerous with AI tools are the ones who haven’t been burned yet — who take the first plausible answer as the correct one.

Second: intellectual ownership. Agents can generate. They can synthesise, propose, implement. What they can’t do is care. The human has to hold the vision, the quality bar, the commitment to getting it right. When that ownership is missing, the output is plausible but hollow — technically functional, architecturally sound on the surface, but lacking the coherence that comes from someone who actually gives a damn about the whole thing.

Where This Leaves Us

The units we inherited — headcount, velocity, story points, sprint cadence — were never measuring what we thought they were measuring. They were measuring the cost of human coordination. That cost is dropping. The units are becoming noise.

What replaces them is not yet agreed. The frameworks that will define the next era of software team design are being written right now, in practice, by teams that have stopped waiting for consensus. Some of those teams will define the shape of what comes next. Most organisations will copy what appears to have worked, years later, with incomplete understanding. A few will optimise the wrong metrics for too long and find themselves on the wrong side of a transition they could see coming.

The hybrid human-agent team is not the destination. It is where we are now — a transitional form, already more capable than the model it is replacing, not yet fully understood by the industry that is adopting it. The + operator has changed the team. The question of how to measure, manage, and scale that team remains open.

That is not a reason to wait. It is a reason to move.

What This Actually Requires: The Enterprise

History is consistent about what happens at moments like this one.

Dr. Mik Kersten’s Project to Product and Geoffrey Moore’s Zone to Win are partly books about frameworks — but they are also collections of stories about failure. Nokia, measuring the wrong things while the smartphone era arrived. Microsoft under Ballmer, optimising an existing model while the cloud redefined the industry beneath it. In each case, the organisations were not standing still. They were running transformation programmes, measuring progress, reporting upward. They were doing what looked like the right things by the metrics they had inherited. The problem was the metrics were built for a world that no longer existed.

This moment has the same structure. The organisations that respond by using AI agents to run leaner — fewer engineers, tighter headcount, lower cost base — will report efficiency gains for a few quarters and miss the strategic window entirely. They will be optimising a model that is being replaced, with tools that could have been used to do the replacing.

What the early movers do differently is not mysterious, but it is uncommon. They treat the new capacity as an offensive capability rather than a cost lever. They fund experiments that were previously unfundable. They move into adjacent zones before challengers establish footholds. They compress the time between idea and shipped product until their rate of learning matches or exceeds the rate of market change.

We have seen this pattern play out before. A small number of organisations go early, move fast, and in doing so define what the new model looks like. Spotify didn’t invent agile squad structures in isolation — but their public articulation of how they operated became the template that the rest of the industry spent years copying, with varying degrees of success. The Scrum adoption curve followed the same pattern: early movers shaped the practice, the mainstream adopted it years later with mixed results, and a long tail of organisations cargo-culted the ceremonies without understanding the underlying problem they were solving.

The same curve is forming now. A cohort of leaders is already running hybrid human-agent teams, already compressing delivery timelines, already asking what they can build rather than what they can save. They are not waiting for the frameworks to catch up, because the frameworks are always written after the fact.

What follows is predictable. The early movers define the shape of the new model. The mainstream copies what appears to have worked, with varying depth of understanding. Some organisations adapt late but successfully, finding their version of the model in time to stay competitive. Others optimise the wrong metric for too long, discover the gap too late, and either contract to a defensible niche or disappear. Every major technology transition produces all four outcomes. The question for any given organisation is not whether this transition is happening — it is which outcome they are currently tracking toward, and whether anyone in a position to change it is paying attention.

The team is two people and four agents. It’s not smaller. It’s different — in the work it can take on, the speed at which it moves, the relationship each person has with what they’re building.

The question isn’t whether this model will become normal. It already is, for the people who’ve found it. The question is what it means for how we hire, how we develop engineers, how we structure organisations, and what we decide is worth building with the capacity we’ve just unlocked.

That last question — what’s worth building — is still entirely human.

References

[1] Anthropic, How AI helps break the cost barrier to COBOL modernization, February 2026. https://claude.com/blog/how-ai-helps-break-cost-barrier-cobol-modernization

[2] Geoffrey Moore, Zone to Win: Organizing to Compete in an Age of Disruption, Diversion Books, 2015.

[3] Ken Schwaber and Jeff Sutherland, The Scrum Guide, November 2020. https://scrumguides.org/scrum-guide.html

[4] Jeff Sutherland, Scrum: Keep Team Size Under 7, 2003. Referenced in Scrum.org forums.

[5] Toptal, Too Big to Scale: A Guide to Optimal Scrum Team Size. https://www.toptal.com/product-managers/agile/scrum-team-size

[6] Agile Pain Relief Consulting, What is the Recommended Scrum Team Size?, 2020. https://agilepainrelief.com/blog/scrum-team-size/

[7] Dr. Mik Kersten, Project to Product: How to Survive and Thrive in the Age of Digital Disruption with the Flow Framework, IT Revolution Press, 2018.

[8] George A. Miller, The Magical Number Seven, Plus or Minus Two: Some Limits on Our Capacity for Processing Information, Psychological Review, 1956. Cowan’s revision to ~4 chunks: N. Cowan, The magical number 4 in short-term memory, Behavioral and Brain Sciences, 2001.

[9] Jensen Huang, Computex keynote, Taipei, May 2023. Reported by CNBC: https://www.cnbc.com/2023/05/30/everyone-is-a-programmer-with-generative-ai-nvidia-ceo-.html

[10] Carta data cited in FourWeekMBA, Solo Founders Rise from 23.7% to 36.3%: AI Tools Enable the One-Person Startup, January 2026. https://fourweekmba.com/solo-founders-rise-from-23-7-to-36-3-ai-tools-enable-the-one-person-startup/