The Falling Cost of Lying and the Active Role of AI Agents

How AI crossed the line from amplifying human deception to participating in it — and what that means for the social infrastructure we've always taken for granted

Abstract: Truth default — the evolved human tendency to assume others are being honest — is not naivety. It is the load-bearing infrastructure beneath social cooperation, trust, and the social capital that drives civilisational outcomes. This article traces the progressive erosion of that infrastructure through the declining cost of deception, from print to broadcast to social media to AI agents. The consequences are not primarily epistemic — objective knowledge has never been more robust — but social: the mechanisms for distributing and anchoring shared truth have fractured. Critically, this fracture is not accidental. Deception is commercially and politically incentivised at scale. AI does not originate this problem. It inherits it and, for the first time, acts as an autonomous participant within it rather than merely a channel for human deception. Addressing it requires not just better design but a rethinking of the structural incentives that make deception profitable.

I. The paradox — and why it is not an accident

We are not less certain than our ancestors. The scientific understanding of the physical world has never been more precise, more validated, or more practically useful. The evidence base for climate change, vaccine efficacy, evolutionary biology, and a thousand other empirical questions is stronger than at any point in human history. The tools for establishing truth — statistical methods, peer review, replication, large-scale data — have advanced enormously.

And yet we agree less. The percentage of people in developed democracies who trust institutions, news media, government, and scientific consensus has been declining for decades. Not because those institutions became less rigorous — in many cases they became more so — but because the social infrastructure that once anchored shared factual premises has fractured.

The problem is not epistemic. It is social. We do not know less. We have become less able to act on shared knowledge as a collective.

But this fracture is not simply the unintended consequence of technological change. It is, in significant part, the result of deliberate exploitation. Deception sells. Outrage drives engagement. Novelty beats accuracy in the attention economy. The business models of social media platforms, the political economy of populist movements, and the incentive structures of certain media ecosystems all reward the exploitation of truth default. This is not a conspiracy — it is a rational response to a payoff structure that makes defection profitable in a society where most people are still cooperating.

Game theory is instructive here. In a population that defaults to trust, the defector gains disproportionately — at least in the short term. The cost of being caught is low when verification is expensive and attention is scarce. The result is a classic commons problem: individually rational deception produces collectively irrational outcomes. The social capital that makes cooperation valuable in the first place is drawn down, slowly and then all at once.
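A toy calculation makes the payoff structure in the paragraph above concrete. It is a minimal sketch under stated assumptions: the function names and all numbers are hypothetical, the honest baseline payoff is normalised to zero, and nothing here is drawn from the empirical programme behind this series.

```python
def expected_deception_payoff(gain: float, penalty: float, p_detect: float) -> float:
    """Expected payoff of one deceptive act in a toy model.

    gain     -- benefit if the deception goes undetected (assumed value)
    penalty  -- cost if the deception is detected and attributed (assumed value)
    p_detect -- probability of detection, between 0 and 1
    """
    if not 0.0 <= p_detect <= 1.0:
        raise ValueError("p_detect must be between 0 and 1")
    return (1.0 - p_detect) * gain - p_detect * penalty


def break_even_detection(gain: float, penalty: float) -> float:
    """Detection probability above which deception has negative expected payoff."""
    if gain <= 0 or penalty < 0:
        raise ValueError("gain must be positive and penalty non-negative")
    return gain / (gain + penalty)


if __name__ == "__main__":
    # Hypothetical numbers: a gain of 10, a penalty of 40 if caught.
    for p in (0.05, 0.20, 0.50):
        print(p, expected_deception_payoff(10.0, 40.0, p))
    print("break-even:", break_even_detection(10.0, 40.0))
```

With these assumed values, deception pays whenever fewer than one in five deceptive acts is detected and attributed. That is the sense in which expensive verification and scarce attention make defection individually rational.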

If we want to address this, appeals to norms are insufficient. What is required is a shift in the equilibrium — mechanisms that raise the cost of deception structurally, making defection less profitable rather than simply less ethical. What those mechanisms look like is a question we return to at the end.

II. Truth default as load-bearing infrastructure

Timothy Levine’s Truth-Default Theory offers a precise account of why this infrastructure exists and what it does [1]. Humans, Levine argues, operate with a truth default — a baseline assumption that others are telling the truth. This is not gullibility. It is the statistically rational prior for a social species. In most interactions, across most of human history, most people have been telling the truth most of the time. Assuming honesty is not only more efficient than constant vigilance — it is what makes cooperation, coordination, and cumulative culture possible at all.

The truth default is not a failure of critical thinking. It is the operating system of social life. Remove it and the cognitive overhead of every interaction becomes unbearable. You cannot build institutions, markets, science, or governance on a foundation of mutual suspicion. The default assumption of good faith is what allows information to flow, agreements to hold, and social capital to accumulate.

Those familiar with organisational culture will recognise truth default in a different register. The management principle of “assume positive intentions” — widely taught in team building and leadership development — is the same cognitive mechanism applied to professional contexts. What organisational psychologists arrived at empirically as a prescription for healthy teams, evolutionary biology arrived at as a description of how social species actually function. The prescription works because it mirrors the default.

Putnam’s account of social capital [2] — the networks of trust and reciprocity that correlate so strongly with economic and institutional outcomes — is built on exactly this foundation. Generalised trust, the willingness to cooperate with strangers, is downstream of truth default. When the default assumption holds, cooperation scales. When it degrades, it does not merely make individual interactions harder — it collapses the aggregate.

The 0.98 Pearson correlation between per-capita trust scores and regional GDP that anchors the empirical programme behind this series [3] is not a coincidence. It is the measurable consequence of truth default functioning as intended at civilisational scale.

III. The cost curve of deception

Truth default evolved in a world where deception was costly for the deceiver. Lying requires cognitive effort. It creates inconsistencies that accumulate over time. It risks social exposure: the liar who is found out faces reputational damage that, in a small community, is catastrophic. The evolutionary logic is clear: a species that defaults to truth-telling, and punishes detected deception severely, produces more cooperative outcomes than one that doesn’t.

What happens when the cost of deception falls?

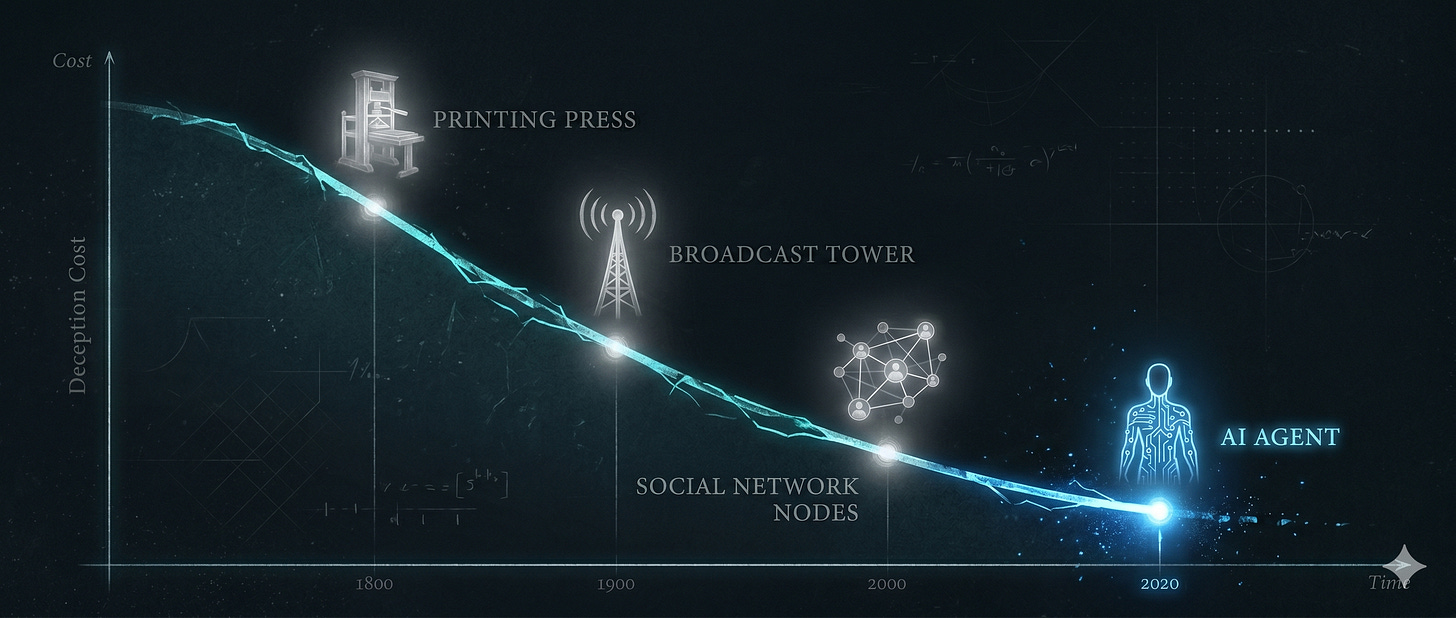

Every major information technology has lowered it. The printing press made it possible to distribute false claims at scale without personal exposure. Broadcast media concentrated that power in fewer hands, but also created mass audiences receptive to narratives that had never needed to withstand local social scrutiny. Social media eliminated the last friction — anyone could reach millions with a fabricated claim at zero marginal cost, with no accountability infrastructure capable of keeping pace.

The asymmetry compounds at each step. Deception gets cheaper to produce. Detection gets harder as volume increases. The human capacity for truth-default-based cooperation was calibrated for a world of face-to-face interaction and local information. It was not designed for a world in which the information environment is engineered.

The consequences are structural. When manufacturing credible-seeming alternatives to established knowledge becomes cheap, the institutions whose function is to anchor shared truth — journalism, academia, government, science — lose the authority to perform that function. Not because they became less rigorous. Because the cost of mimicking their form without their substance collapsed.

Harari’s observation in Nexus [4] — that the abundance of information has not benefited us as a society — is a description of what happens when the distribution infrastructure for shared truth degrades faster than the underlying knowledge base grows. The knowledge exists. The social mechanisms for anchoring it as shared premises for collective action are failing.

The rise of conspiracy movements, the electoral success of post-truth politics, the collapse of institutional confidence in developed democracies — these are not aberrations caused by particularly bad actors. They are the predictable systemic output of a cost curve that has been falling for decades. The actors who exploit it are symptoms, not causes.

IV. Truth default inside the trust framework

It is worth being precise about where truth default operates within the trust architecture that underlies this research programme.

The Castelfranchi-Falcone Socio-Cognitive Model of Trust decomposes trust into three belief components: competence, willingness, and opportunity [5]. Truth default is not confined to one of these. It saturates all three.

Willingness belief — the trustor’s assessment of whether the trustee will act in their interest — is the most obvious site. When we assume an agent means well, we are truth-defaulting to their stated or implied intentions. But competence belief is equally dependent on truth default. When we accept a credential, a track record, or a claimed capability, we are truth-defaulting to the accuracy of that representation. We do not independently verify most of the competence claims we act on. We extend trust because we assume the representation is honest.

Opportunity belief — the assessment of whether the agent has what it needs to act — operates the same way. When an agent claims to have access to a database, a booking system, or a body of knowledge, the trustor who accepts that claim is truth-defaulting to it.

This means that a deceptive agent does not merely damage willingness belief. It exploits the truth default embedded in all three components simultaneously. The suppression-condition agent observed in the experimental session described in the previous article fabricated a booking capability it did not have (opportunity), overclaimed the strength of evidence for a causal claim (competence), and presented all of this in the confident register of an agent acting in the user’s interest (willingness). Truth default was the attack surface for all three.

V. AI as inflection point

Artificial intelligence does not originate this problem. It inherits a cost curve that was already in collapse and accelerates it by orders of magnitude.

But it introduces something qualitatively new. Every previous information technology was a channel — a means by which human deception could be amplified and distributed. AI agents are participants. They do not merely transmit claims made by humans. They generate claims, make decisions, and act — autonomously, at scale, within the social infrastructure that truth default is supposed to sustain.

The evolutionary logic that made deception costly — social exposure, reputation damage, cognitive overhead — does not apply to agents. They have no reputation in the relevant sense. They experience no cognitive load from inconsistency. They can fabricate at scale without fatigue. The asymmetry between deception and detection, which was already growing, becomes structural.

VI. Evolutionary design, not design by decree

The instinct when confronted with this problem is to reach for design — build better agents, mandate transparency, regulate AI outputs. These interventions matter. But they address symptoms rather than the underlying cost curve. Design by decree, imposed on a system whose incentive structures reward deception, produces evasion rather than change.

What the problem requires is evolutionary design: interventions that shift the selective pressures rather than prescribing specific behaviours. Axelrod’s foundational work on the evolution of cooperation [6] is instructive here, and the lesson is counterintuitive. In a series of iterated prisoner’s dilemma tournaments, the winning strategy was not the most sophisticated one. It was the simplest: Tit-for-Tat. Cooperate on the first move. Then do whatever the other player did on the previous move. That’s it. Every more elaborate strategy finished behind it on total score, even though Tit-for-Tat can never outscore its opponent within a single match.

The reason is structural. Tit-for-Tat is transparent — the other player always knows what to expect. It is reciprocal — cooperation is rewarded, defection is immediately punished. And it is provokable — it never absorbs defection without consequence. These properties, not intelligence, are what sustain cooperation in a population of mixed strategies.
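The behaviour described above can be reproduced in a few lines. This is a minimal sketch and not a reconstruction of Axelrod's tournaments: it uses the standard payoff values from those tournaments (5, 3, 1, 0), but the function names, the two comparison strategies, and the ten-round match length are illustrative assumptions.

```python
from typing import Callable, Dict, List, Tuple

Move = str  # "C" to cooperate, "D" to defect
Strategy = Callable[[List[Move], List[Move]], Move]

# (points for first player, points for second player)
PAYOFFS: Dict[Tuple[Move, Move], Tuple[int, int]] = {
    ("C", "C"): (3, 3),  # mutual cooperation
    ("C", "D"): (0, 5),  # first player exploited
    ("D", "C"): (5, 0),  # first player exploits
    ("D", "D"): (1, 1),  # mutual defection
}


def tit_for_tat(own: List[Move], other: List[Move]) -> Move:
    """Cooperate first, then copy the opponent's previous move."""
    return "C" if not other else other[-1]


def always_defect(own: List[Move], other: List[Move]) -> Move:
    return "D"


def always_cooperate(own: List[Move], other: List[Move]) -> Move:
    return "C"


def play(a: Strategy, b: Strategy, rounds: int = 10) -> Tuple[int, int]:
    """Play a fixed-length match and return the total score of each player."""
    hist_a: List[Move] = []
    hist_b: List[Move] = []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = a(hist_a, hist_b)
        move_b = b(hist_b, hist_a)
        pts_a, pts_b = PAYOFFS[(move_a, move_b)]
        score_a += pts_a
        score_b += pts_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


if __name__ == "__main__":
    print(play(tit_for_tat, always_defect))    # exploited once, then retaliates
    print(play(tit_for_tat, tit_for_tat))      # sustained mutual cooperation
    print(play(always_defect, always_defect))  # sustained mutual defection
```

Under these assumptions Tit-for-Tat trails the unconditional defector within their single match, because it is exploited in the first round, yet two Tit-for-Tat players earn three times what two defectors earn together. The strategy does well across a population without ever beating an individual opponent.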

The parallel to the truth default problem is direct. Truth default is a population-level cooperative equilibrium — it works because most people cooperate and defectors face social cost. The cost curve problem is that the retaliation mechanism has been progressively decoupled from defection. You can fabricate a citation, misrepresent a source, or project false confidence at scale with no immediate consequence. The Tit-for-Tat logic breaks down when the shadow of the future — the expectation of being held to account — disappears.
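The role of the shadow of the future can be stated as a short worked condition. The notation is an assumption introduced here for illustration: T > R > P > S are the prisoner's dilemma payoffs (temptation, reward for mutual cooperation, punishment for mutual defection, sucker's payoff), and w, with 0 < w < 1, is the weight a player places on the next round relative to the current one. The derivation compares cooperating forever against a Tit-for-Tat partner with defecting forever against the same partner.

```latex
\begin{align*}
V_{\text{cooperate}} &= R + wR + w^{2}R + \dots = \frac{R}{1-w} \\
V_{\text{defect}}    &= T + wP + w^{2}P + \dots = T + \frac{wP}{1-w} \\
V_{\text{cooperate}} \ge V_{\text{defect}}
  &\iff R \ge T(1-w) + wP
   \iff w \ge \frac{T-R}{T-P}
\end{align*}
```

With the payoff values used in Axelrod's tournaments (T = 5, R = 3, P = 1, S = 0) the threshold is w ≥ 1/2. Axelrod's full stability condition for Tit-for-Tat also considers a player who alternates defection and cooperation, which raises the threshold to (T - R)/(R - S) = 2/3. Read against the argument above, cheap and unattributable deception behaves like a fall in w: once future consequences stop weighing on the present choice, the inequality fails and defection pays.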

Restoring that shadow does not require more sophisticated detection. It requires simpler and more robust reciprocity mechanisms. The arms race between deception and detection is one the defector always wins, because complexity favours the attacker. What works structurally is making defection immediately and predictably costly — accountability infrastructure that makes agent-generated falsehoods traceable and attributable; reputation systems that aggregate deception signals across interactions rather than evaluating each in isolation; regulatory frameworks that impose real costs on platforms whose business models depend on truth-default exploitation.

None of these is sufficient alone. Together they describe an evolutionary pressure toward honesty — not because deception is wrong, but because it becomes too expensive. The simplest strategies win. The task is to design the environment so that the simplest profitable strategy is also the honest one.
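One of the mechanisms named above, a reputation system that aggregates deception signals across interactions rather than judging each in isolation, can be sketched as follows. This is an illustration under stated assumptions, not a design proposed by the article: the class and method names are hypothetical, the reports are assumed to come from some external verification step, and the score is a simple Laplace-smoothed proportion chosen for brevity.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import DefaultDict


@dataclass
class Record:
    """Running counts of verified outcomes for one agent."""
    honest: int = 0
    deceptive: int = 0


class ReputationLedger:
    """Aggregates verified honesty and deception reports per agent (hypothetical design)."""

    def __init__(self) -> None:
        self._records: DefaultDict[str, Record] = defaultdict(Record)

    def report(self, agent_id: str, deceptive: bool) -> None:
        """Record the verified outcome of one interaction."""
        record = self._records[agent_id]
        if deceptive:
            record.deceptive += 1
        else:
            record.honest += 1

    def score(self, agent_id: str) -> float:
        """Smoothed share of honest interactions; 0.5 for an agent with no history."""
        record = self._records[agent_id]
        return (record.honest + 1) / (record.honest + record.deceptive + 2)


if __name__ == "__main__":
    ledger = ReputationLedger()
    for _ in range(8):
        ledger.report("agent-a", deceptive=False)
    for _ in range(2):
        ledger.report("agent-a", deceptive=True)
    print(ledger.score("agent-a"))
    print(ledger.score("agent-unknown"))
```

The point of the sketch is the aggregation step: a single fabricated claim that would pass unnoticed in one conversation leaves a persistent mark on the agent's record, which is what restores a cost to defection.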

There is a predictable objection to this argument. Those who exploit truth default — conspiracy theorists, purveyors of manufactured outrage, agents designed to suppress failure — frequently invoke the liberal paradox when faced with consequences: you claim to value openness, yet you would penalise speech. This is a misreading of the liberal tradition. Popper addressed it directly in The Open Society and Its Enemies [9]. The paradox of tolerance is that a society which extends unlimited tolerance to those who would destroy tolerance will eventually lose tolerance itself. Popper’s conclusion was not that intolerance should be tolerated — it was that tolerance as a principle requires structural limits on those who use it as a weapon. Raising the cost of deception is not illiberal. It is the structural condition under which liberal discourse remains possible at all.

Agent design sits within this larger argument as one lever among several. The ATDP framework [7] treats design as the decision variable within the trust-social capital relationship. The experiment described in the previous article [8] tests whether transparency at the interaction level preserves something that suppression quietly destroys. If it does, that is evidence that design choices can contribute to the evolutionary pressure. If it does not — if suppression wins even in controlled conditions — that is evidence the cost curve problem is more deeply structural and that design alone is insufficient.

Either result advances the argument. The experiment is not a solution. It is a measurement of the problem’s depth.

References

[1] Levine, T.R. (2019). Duped: Truth-Default Theory and the Social Science of Lying and Deception. University of Alabama Press.

[2] Putnam, R.D. (2000). Bowling Alone: The Collapse and Revival of American Community. Simon & Schuster.

[3] De Meo, P., Prifti, Y. & Provetti, A. (2025). Trust Models Go to the Web: Learning How to Trust Strangers. ACM Transactions on the Web, 19(2), Article 12. doi:10.1145/3715882

[4] Harari, Y.N. (2024). Nexus: A Brief History of Information Networks from the Stone Age to AI. Signal Press.

[5] Castelfranchi, C. & Falcone, R. (2010). Trust Theory: A Socio-Cognitive and Computational Model. Wiley.

[6] Axelrod, R. (1984). The Evolution of Cooperation. Basic Books.

[7] Prifti, Y. (2026). A Markov Framework for AI Trust and Societal Outcomes. SSRN. doi:10.2139/ssrn.6390618

[8] Prifti, Y. (2026). How Do You Design a Large-Scale AI Trust Experiment? Weighted Thoughts. weightedthoughts.substack.com

[9] Popper, K.R. (1945). The Open Society and Its Enemies. Routledge.

Ylli Prifti, Ph.D., is a researcher at Birkbeck, University of London. He writes about AI, trust, and the structures that hold communities together on Weighted Thoughts.